Don’t we all love our backups? We all have them. Some of us have backups done poorly, and some of us worry that ours is still not as good as we would like. Few have it nailed. We don’t have it nailed…

Here at EXPLOINSIGHTS, Inc. we think we are in the second camp (“not as good as we would like it to be”). We have a ton of backups of our data, much of it offline (totally safe from e.g. malware), and some of those are in different locations (protected against theft, fire, flood, mayhem AND hackers), but they would all require a lot of work to get going if we ever needed them. So if we suffer a disaster (office burned to the ground, swallowed up by a tornado, or house stolen by Hillary Clinton’s Russian Hackers), rebuilding our system would still take time. What we ALL want, but can seldom get, is a live backup that runs in parallel to our existing system: a hidden ghost system that mirrors every change we make, without being exposed to the hazards of users and such.

So hold that thought…

On to our favourite Ubuntu Linux capability: LXC. LXC can export (copy or move) containers from one machine to another, LIVE. This means you don’t have to stop a container to take a backup copy of it and place it on ANOTHER MACHINE. In principle, the result is similar to copying a stopped container, but without the drag of stopping it.

We know it has to be pretty complicated under the hood for this to work, and it’s evident that it’s not really intended for a production environment, but we are going to play with it a little to see whether live migration can give us full working copies of our system servers on another machine. And if it can, we want to place that machine not on the LAN, but on the WAN.

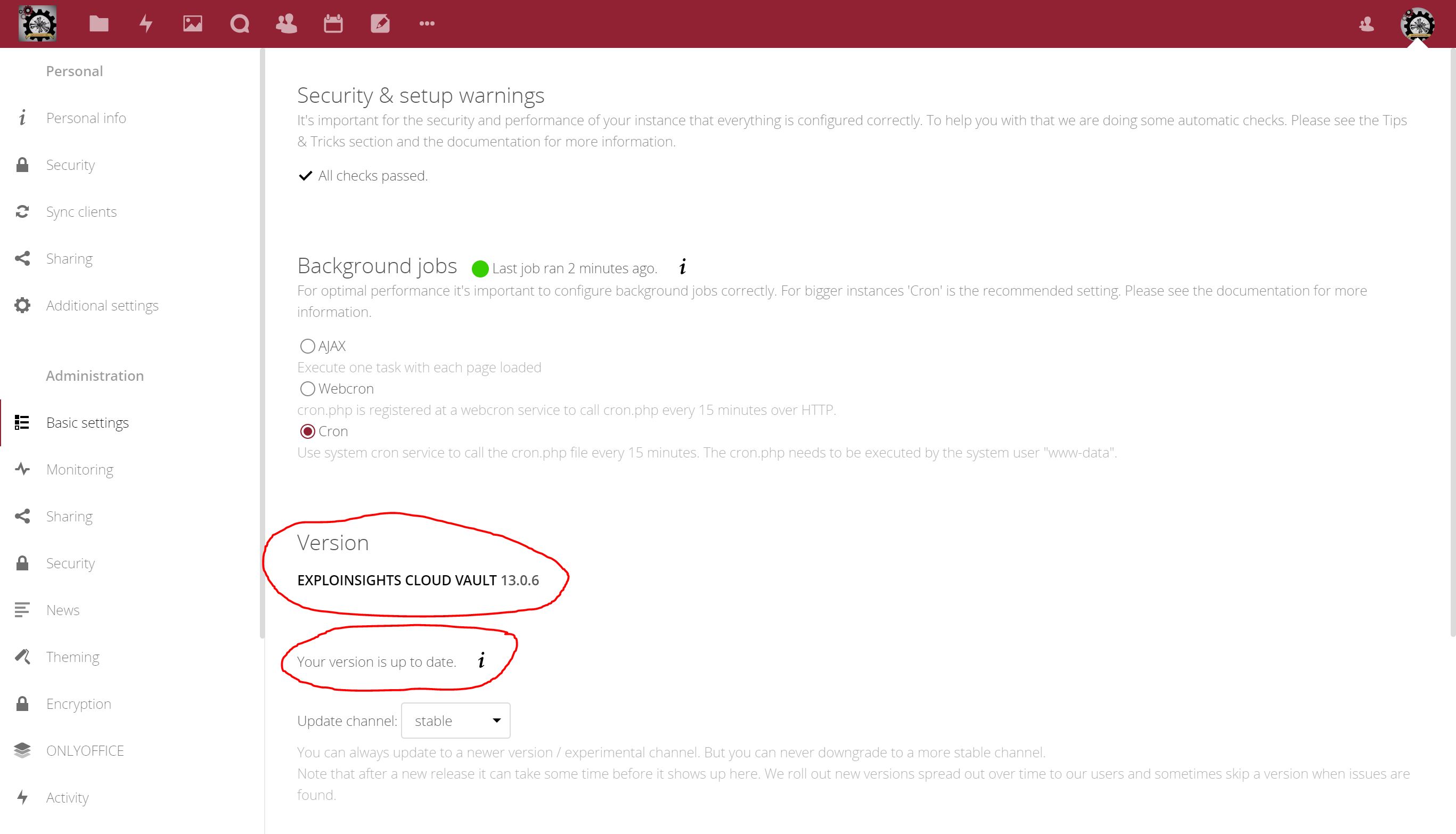

Our largest container is our Nextcloud instance. We have multiple users, with all kinds of end-to-end encrypted AND simultaneously server-side encrypted data in multiple accounts, all stored in one CONVENIENT container. We are confident it’s SECURE from theft: we have tried to hack it, and all the useful data are encrypted. But the container is growing; today it stands at about 138 GB. Stopping that container and copying it, even over the LAN, is a slow process, and the container is DOWN for the whole time. If a user (or worse, a customer) tries to access the container to get a file, all they see is “server is down” in their browser. #NotUseful
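To put “a slow process” into numbers, here is a back-of-envelope sketch of how long a stop-and-copy would keep the container down. The link speeds and the 70% efficiency factor are our assumptions for illustration, not measurements from our network, and the 20 Mbit/s entry simply stands in for a typical WAN upload.

```python
"""Rough stop-and-copy downtime estimate for a container of a given size.

Assumptions (ours, not measured): sizes are decimal gigabytes and the
link delivers only a fraction of its nominal rate (protocol overhead,
disk speed, encryption).
"""


def copy_seconds(size_gb: float, link_mbit_s: float, efficiency: float = 0.7) -> float:
    """Seconds to move size_gb over a link of link_mbit_s at the given efficiency."""
    if size_gb < 0 or link_mbit_s <= 0 or not 0 < efficiency <= 1:
        raise ValueError("invalid size, link speed or efficiency")
    bits = size_gb * 1e9 * 8
    return bits / (link_mbit_s * 1e6 * efficiency)


def pretty(seconds: float) -> str:
    """Format seconds as 'Hh MMm', rounded to the nearest minute."""
    minutes = round(seconds / 60)
    return f"{minutes // 60}h {minutes % 60:02d}m"


if __name__ == "__main__":
    container_gb = 138  # approximate size of our Nextcloud container
    # Hypothetical link speeds: gigabit LAN, 10 GbE LAN, 20 Mbit/s WAN upload.
    for label, mbit in [("1 GbE LAN", 1000), ("10 GbE LAN", 10000), ("20 Mbit/s upload", 20)]:
        print(f"{label:>18}: ~{pretty(copy_seconds(container_gb, mbit))} of downtime")
```

Under those assumptions, a gigabit LAN means roughly 26 minutes of downtime, while a 20 Mbit/s upload would take the better part of a day.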

So for this reason, we don’t like to “copy” our containers – we hate the downtime risk.

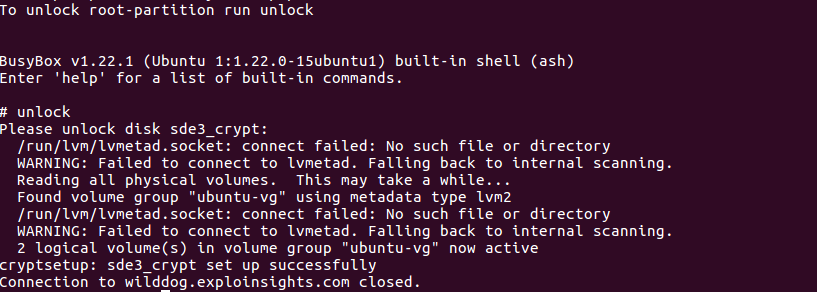

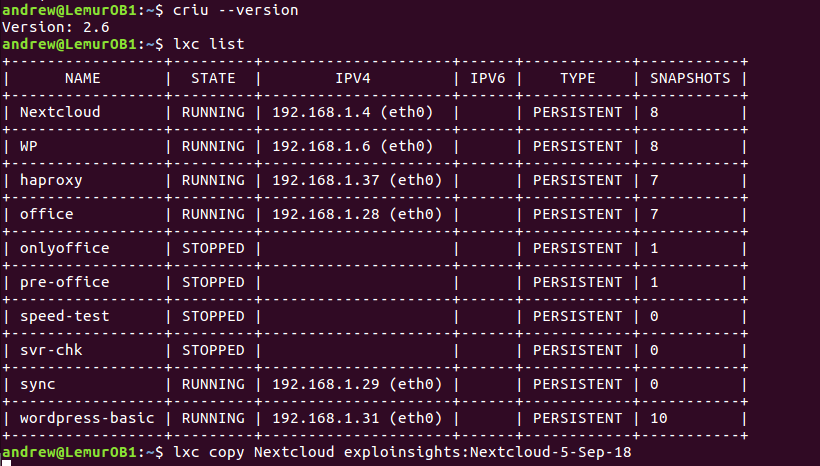

So... we are going to play with live copying. We have installed CRIU (Checkpoint/Restore In Userspace, which LXC relies on for live migration) on two of our servers, and we are doing LAN-based experiments. It’ll take time, as we have to copy-and-test. Copy-and-test. We have to make sure all accounts can be accessed AND that all data (double encrypted at rest, triple if you count the full-disk encryption; quadruple if you count the https:// transport mechanism for copying) can be accessed without one byte of corruption.
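“Without one byte of corruption” is easy to say and tedious to check by hand. Below is a minimal sketch of one way to check it, assuming both the original and the copied container data can be mounted as local directories (the two command-line paths are placeholders, not our real layout): hash every file on each side with SHA-256 and report anything missing, extra or different.

```python
"""Compare two directory trees byte-for-byte via SHA-256 digests.

A minimal sketch, assuming both copies are reachable as local paths
(e.g. the source container's data and the copied container's data,
mounted read-only). The paths passed on the command line are placeholders.
"""
import hashlib
import sys
from pathlib import Path


def digest(path: Path, chunk: int = 1 << 20) -> str:
    """Return the SHA-256 hex digest of a file, read in 1 MiB chunks."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for block in iter(lambda: f.read(chunk), b""):
            h.update(block)
    return h.hexdigest()


def tree_digests(root: Path) -> dict[str, str]:
    """Map each regular file's path (relative to root) to its digest; symlinks are skipped."""
    return {
        str(p.relative_to(root)): digest(p)
        for p in sorted(root.rglob("*"))
        if p.is_file() and not p.is_symlink()
    }


def compare_trees(original: Path, copy: Path) -> list[str]:
    """Return human-readable differences; an empty list means the trees match."""
    a, b = tree_digests(original), tree_digests(copy)
    problems = [f"missing in copy: {name}" for name in sorted(a.keys() - b.keys())]
    problems += [f"extra in copy: {name}" for name in sorted(b.keys() - a.keys())]
    problems += [
        f"content differs: {name}"
        for name in sorted(a.keys() & b.keys())
        if a[name] != b[name]
    ]
    return problems


if __name__ == "__main__" and len(sys.argv) == 3:
    # Hypothetical usage: python compare_trees.py /mnt/original /mnt/copy
    issues = compare_trees(Path(sys.argv[1]), Path(sys.argv[2]))
    print("\n".join(issues) or "identical")
    sys.exit(1 if issues else 0)
```

Comparing file digests only checks the bytes on disk; it says nothing about whether Nextcloud itself can open every account, so the account logins still have to be tested separately.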

Let the FUN begin. We are writing this short article while the first trial is underway. Our 138 GB container lives on our “OB1” server (a Star Wars throwback):

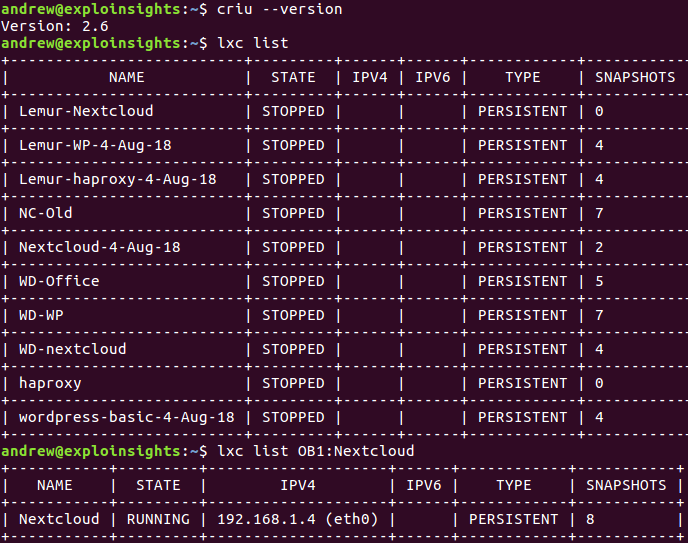

See the last entry? We are copying the RUNNING container to our second server (boringly called ‘exploinsights’). It’s a big file, even for our super-fast LAN router.

The copied image has not yet appeared on ‘exploinsights’, but we have confirmed that the original container is still up ’n running on the OB1 host.

Lots of testing to do. Clearly, files this large can’t be backed up easily over the internet, so this is definitely an “as-well-as”, non-routine option for machine-to-machine backup. Still, we like the concept, so we are spending calories exploring it.

#StayTuned for an update. Also, please let us know what you think of this – drop us an email at:

UPDATE:

I need a good Plan B: the copy failed after about two hours. A container this size should not take that long to copy over our LAN, so something is broken, and we are not going to try to dig our way out of that hole. LXC live migration died on the day we tried it. #RIP
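The “it would not take that long” judgement is just arithmetic. A small sketch, assuming decimal gigabytes and a hypothetical expected LAN rate of about 700 Mbit/s: work out the average rate that a two-hour transfer implies, and compare it with what the link normally sustains.

```python
"""What average throughput does a copy that ran for a given time imply?

Sketch only: we assume decimal gigabytes and that the whole container
must cross the wire once. If the implied rate is far below what the link
normally sustains, the transfer was probably broken rather than just slow.
"""


def implied_mbit_s(size_gb: float, elapsed_s: float) -> float:
    """Average rate (Mbit/s) needed to move size_gb in elapsed_s seconds."""
    if elapsed_s <= 0:
        raise ValueError("elapsed time must be positive")
    return size_gb * 1e9 * 8 / elapsed_s / 1e6


def suspiciously_slow(size_gb: float, elapsed_s: float, expected_mbit_s: float,
                      slack: float = 0.25) -> bool:
    """True if the implied rate is below `slack` times the expected link rate."""
    return implied_mbit_s(size_gb, elapsed_s) < slack * expected_mbit_s


if __name__ == "__main__":
    elapsed = 2 * 3600  # roughly two hours before the copy failed
    print(f"implied average rate: {implied_mbit_s(138, elapsed):.0f} Mbit/s")
    # Hypothetical expectation: a gigabit LAN that normally sustains ~700 Mbit/s.
    print("suspiciously slow?", suspiciously_slow(138, elapsed, expected_mbit_s=700))
```

Two hours for 138 GB works out to roughly 153 Mbit/s on average, well under the assumed rate, which is consistent with our reading that the migration had broken rather than simply being slow.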

So, when we first set up our remote Linux logins, we used the standard SSH port (22). We use public keys for our SSH logins, so we weren’t especially worried about port scanners and bot attacks.
