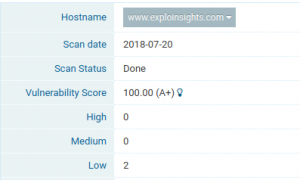

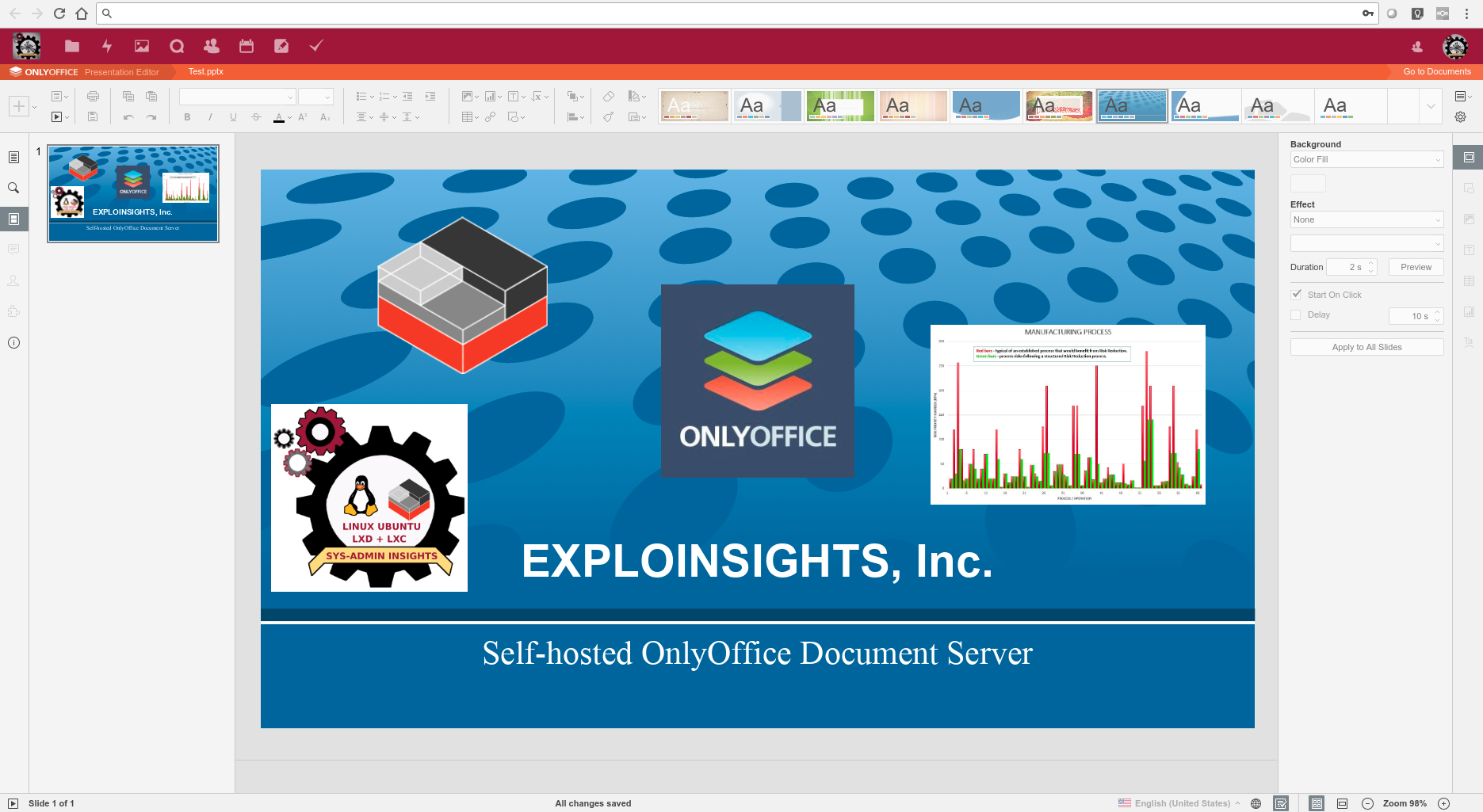

In our quest to migrate away from the relentlessly privacy-mining Microsoft products, we have discovered ‘OnlyOffice’ – a very Microsoft-compatible document editing suite. Onlyoffice have Desktop and server-based versions, including an Open Source self-hosted version, which scratches a LOT of itches for Exploinsights, Inc for NIST-800-171 compliance and data-residency requirements.

If you’ve ever tried to install the open-source self-hosted OnlyOffice document server (e.g. using the official installation instructions here) you may find it’s not as simple as you’d like. Firstly, per the official instructions, the onlyoffice server needs to be installed on a separate machine. You can of course use a dedicated server, but we found that for our application, this is a poor use of resources as our usage is relatively low (so why have a physical machine sitting idly for most of the time?). If you try to install onlyoffice on a machine with other services to try to better utilise your hardware, you can quickly find all kinds of conflicts, as the onlyoffice server uses multiple services to function and things can get messed up very quickly, breaking a LOT of functionality on what could well be a critical asset you were using (before you broke it!).

Clearly, a good compromise is to use a Virtual Machine, and we like those a LOT here at Exploinsights, Inc. Our preferred form of virtualisation is LXD/LXC because of performance: LXC is blindingly fast, so it minimizes user-experience lag issues. There is however no official documentation for installing onlyoffice in an lxc container, and although it turns out to be not straightforward, it IS possible, and quite easy once you work through the issues.

This article is to help guide those who want to install onlyoffice document server in an LXC container, running under Ubuntu 16.04. We have this running on a System76 Lemur Laptop. The onlyoffice service is resource heavy, so you need a good supply of memory, cpu power and disk space. We assume you have these covered. For the record, the base OS we are running our lxc containers in is Ubuntu 16.04 server.

Pre-requisites:

You need a dns name for this service – a subdomain of your main url is fine. So if you own “mybusiness.com”, a good server name could be “onlyoffice.mybusiness.com”. Obviously you need dns records to point to the server we are about to create. Also, your network router or reverse proxy needs to be configured to direct traffic for ports 80 and 443 to your soon-to-be-created onlyoffice server.

Instructions:

Create and launch a container, then enter the container to execute commands:

lxc launch ubuntu:16.04 onlyoffice

lxc exec onlyoffice bash

Now let’s start the installation. Firstly, a mandatory update (follow any prompts that ask permission to install update(s)):

apt update && apt upgrade && apt autoremove

Then restart the container to make sure all changes take effect:

exit #Leave the container

lxc restart onlyoffice #Restart it

lxc exec onlyoffice bash #Re-enter the container

Now, we must add an entry to the /etc/hosts file (lxc should really do this for us, but it doesn’t, and onlyoffice will not work unless we do this):

nano /etc/hosts #edit the file

Adjust your file to change from something like this:

127.0.0.1 localhost

# The following lines are desirable for IPv6 capable hosts

::1 ip6-localhost ip6-loopback

fe00::0 ip6-localnet

ff00::0 ip6-mcastprefix

ff02::1 ip6-allnodes

ff02::2 ip6-allrouters

ff02::3 ip6-allhosts

To this (note the added 127.0.1.1 line, which should carry your own server name):

127.0.0.1 localhost

127.0.1.1 onlyoffice.mybusiness.com

# The following lines are desirable for IPv6 capable hosts

::1 ip6-localhost ip6-loopback

fe00::0 ip6-localnet

ff00::0 ip6-mcastprefix

ff02::1 ip6-allnodes

ff02::2 ip6-allrouters

ff02::3 ip6-allhosts
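If you would rather script this edit than make it by hand in nano, something like the following could work. It is only a minimal sketch: the function name add_hosts_entry is our own invention, and it appends the entry at the end of the file rather than directly under the localhost line, which should not matter for name lookups.

```shell
# Hypothetical helper (not part of lxc or onlyoffice): append "IP NAME"
# to a hosts file, but only if NAME is not already listed there.
add_hosts_entry() {
    hosts_file="$1"
    ip="$2"
    name="$3"
    # -F: treat the name as a fixed string; -w: match it as a whole word
    if ! grep -qwF -- "$name" "$hosts_file"; then
        printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
    fi
}
```

Inside the container this would be called as: add_hosts_entry /etc/hosts 127.0.1.1 onlyoffice.mybusiness.com. Running it a second time changes nothing, so it is safe to repeat after restoring a snapshot.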

Save and quit. Now we install postgresql:

apt install postgresql

Now we have to do some things a little differently than at a regular command line, because we operate as the root user in lxc. We can create the database by first switching to the postgres user:

su - postgres

Then type:

psql

CREATE DATABASE onlyoffice;

CREATE USER onlyoffice WITH password 'onlyoffice';

GRANT ALL privileges ON DATABASE onlyoffice TO onlyoffice;

\q

exit
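The same three statements can also be run without typing them into an interactive psql session. The sketch below is one way to do that, assuming you are root inside the container; the function name onlyoffice_db_sql is hypothetical, and the optional password argument is our addition (it defaults to the 'onlyoffice' password used in this article).

```shell
# Hypothetical helper: print the SQL used above, with the password as an
# optional first argument (defaults to 'onlyoffice', as in this article).
# Assumption: the password contains no single quotes.
onlyoffice_db_sql() {
    db_password="${1:-onlyoffice}"
    cat <<SQL
CREATE DATABASE onlyoffice;
CREATE USER onlyoffice WITH password '${db_password}';
GRANT ALL privileges ON DATABASE onlyoffice TO onlyoffice;
SQL
}

# As root inside the container, the output could be piped to psql:
#   onlyoffice_db_sql | su - postgres -c psql
```

If you do pick a different password, remember to enter that same password when the document server installer asks for it later.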

We should now have a database created and ready for use. Now this:

curl -sL https://deb.nodesource.com/setup_6.x | bash -

apt install nodejs

apt install redis-server rabbitmq-server

echo "deb http://download.onlyoffice.com/repo/debian squeeze main" | tee /etc/apt/sources.list.d/onlyoffice.list

apt-key adv --keyserver hkp://keyserver.ubuntu.com:80 --recv-keys CB2DE8E5

apt update

We are now ready to install the document server. This is an EXCELLENT TIME to take a snapshot of the lxc container:

exit

lxc snapshot onlyoffice pre-server-install

This creates a snapshot that we can EASILY restore another day. And sadly, we probably have to as we have yet to find a way of UPDATING an existing document-server instance, so whenever onlyoffice release an update, we repeat the installation from this point forward after restoring the container configuration.
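For reference, rolling back to that snapshot is done from the host with lxc restore. The wrapper below is only a sketch: the function name and the DRY_RUN switch are our own additions, there so that you can see the exact command before running it. Bear in mind that restoring discards everything done inside the container after the snapshot was taken.

```shell
# Hypothetical wrapper, run on the HOST (not inside the container).
# Defaults match the container and snapshot names used in this article.
# With DRY_RUN=1 it only prints the command instead of running it.
restore_onlyoffice() {
    container="${1:-onlyoffice}"
    snapshot="${2:-pre-server-install}"
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "lxc restore $container $snapshot"
    else
        lxc restore "$container" "$snapshot"
    fi
}
```

Calling DRY_RUN=1 restore_onlyoffice prints the command that would be executed; calling restore_onlyoffice on its own performs the restore.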

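Let’s continue with the installation. We left the container to take the snapshot, so re-enter it first (lxc exec onlyoffice bash) and then run: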

apt install onlyoffice-documentserver

You will be asked to enter the password for the onlyoffice database user during the install. Type the following and press enter:

onlyoffice

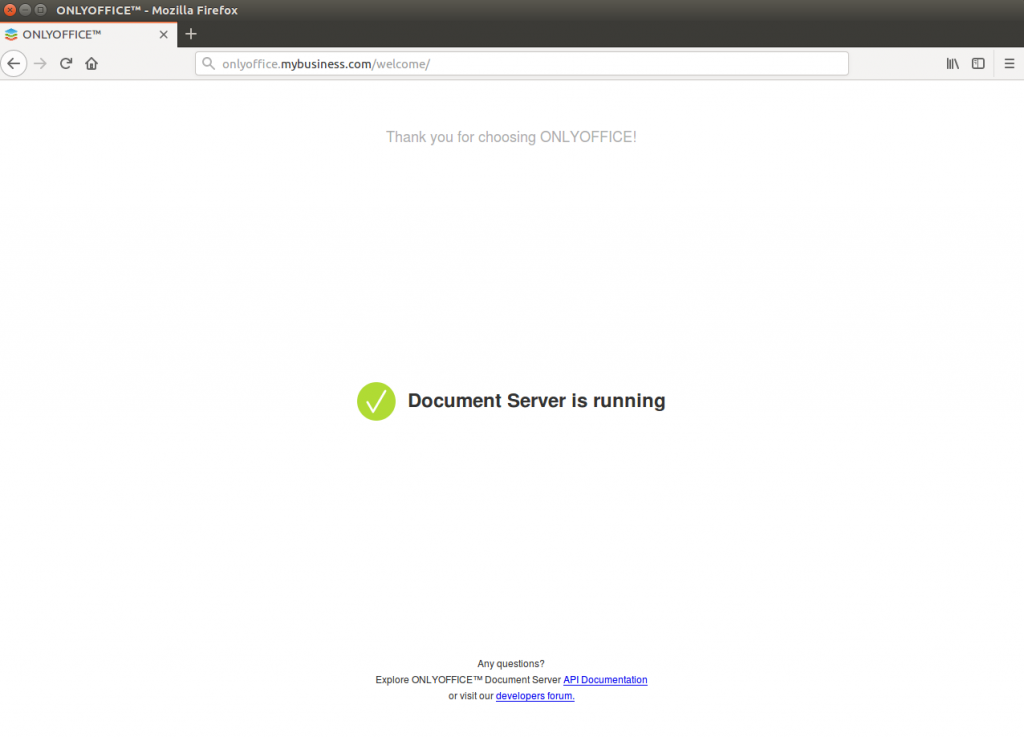

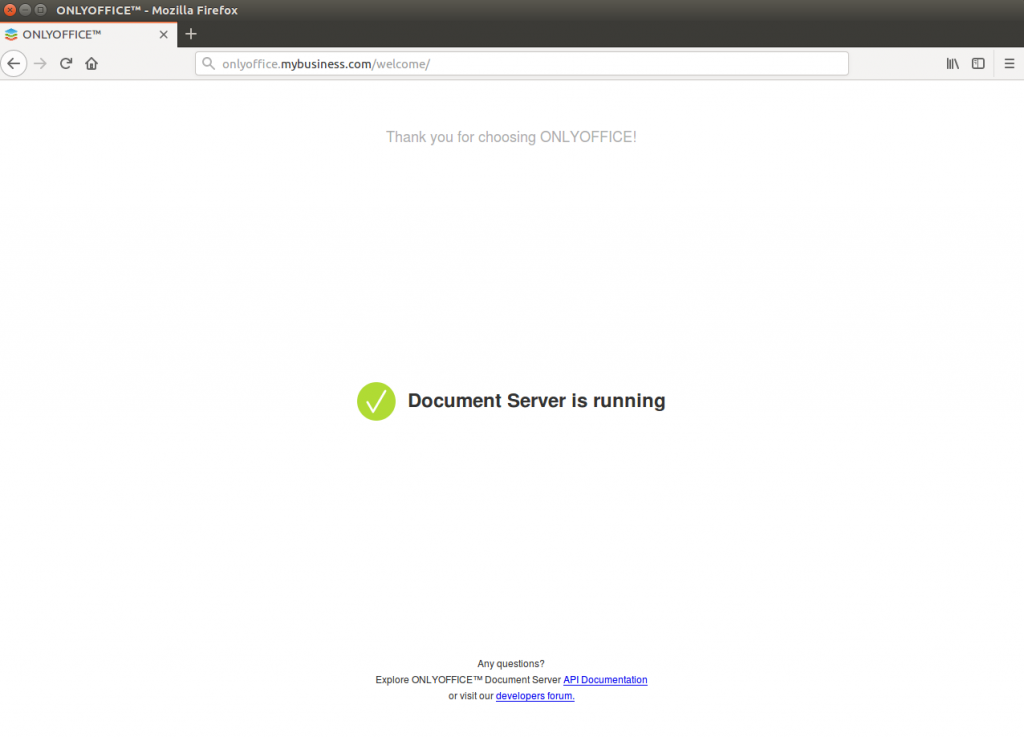

Once this is done, if you access your web site (i.e. your version of ‘onlyoffice.mybusiness.com’) you should see the following screen:

We now have a document server running, albeit in http mode only. This is not good enough: we need to use SSL/TLS to make our server safe from eavesdroppers. There’s a FREE way to do this using the EXCELLENT LetsEncrypt service, and this is how we do that:

Back to the command line in our lxc container. Edit this file:

nano /etc/nginx/conf.d/onlyoffice-documentserver.conf

Delete everything there and change it to the following (changing your domain name accordingly):

include /etc/nginx/includes/onlyoffice-http.conf;

server {

listen 0.0.0.0:80;

listen [::]:80 default_server;

server_name onlyoffice.mybusiness.com;

server_tokens off;

include /etc/nginx/includes/onlyoffice-documentserver-*.conf;

location ~ /.well-known/acme-challenge {

root /var/www/onlyoffice/;

allow all;

}

}

Save and quit the editor. Then execute:

systemctl reload nginx

apt install letsencrypt

And then this, changing the email address and domain name to yours:

letsencrypt certonly --webroot --agree-tos --email YOUR_EMAIL_ADDRESS -d onlyoffice.mybusiness.com -w /var/www/onlyoffice/

Now, we have to re-edit the nginx file:

nano /etc/nginx/conf.d/onlyoffice-documentserver.conf

…and replace the contents with the text below, changing the domain name (in the server_name lines and in the two certificate paths) to your own:

include /etc/nginx/includes/onlyoffice-http.conf;

## Normal HTTP host

server {

listen 0.0.0.0:80;

listen [::]:80 default_server;

server_name onlyoffice.mybusiness.com;

server_tokens off;

## Redirects all traffic to the HTTPS host

root /nowhere; ## root doesn't have to be a valid path since we are redirecting

rewrite ^ https://$host$request_uri? permanent;

}

#HTTP host for internal services

server {

listen 127.0.0.1:80;

listen [::1]:80;

server_name localhost;

server_tokens off;

include /etc/nginx/includes/onlyoffice-documentserver-common.conf;

include /etc/nginx/includes/onlyoffice-documentserver-docservice.conf;

}

## HTTPS host

server {

listen 0.0.0.0:443 ssl;

listen [::]:443 ssl default_server;

server_name onlyoffice.mybusiness.com;

server_tokens off;

root /usr/share/nginx/html;

ssl_certificate /etc/letsencrypt/live/onlyoffice.mybusiness.com/fullchain.pem;

ssl_certificate_key /etc/letsencrypt/live/onlyoffice.mybusiness.com/privkey.pem;

# modern configuration. tweak to your needs.

ssl_protocols TLSv1.2;

ssl_ciphers 'ECDHE-ECDSA-AES256-GCM-SHA384:ECDHE-RSA-AES256-GCM-SHA384:ECDHE-ECDSA-CHACHA20-POLY1305:ECDHE-RSA-CHACHA20-POLY1305:ECDHE-ECDSA-AES128-GCM-SHA256:ECDHE-RSA-AES128-GCM-SHA256:ECDHE-ECDSA-AES256-SHA384:ECDHE-RSA-AES256-SHA384:ECDHE-ECDSA-AES128-SHA256:ECDHE-RSA-AES128-SHA256';

ssl_prefer_server_ciphers on;

# HSTS (ngx_http_headers_module is required) (15768000 seconds = 6 months)

add_header Strict-Transport-Security max-age=15768000;

ssl_session_cache builtin:1000 shared:SSL:10m;

# add_header X-Frame-Options SAMEORIGIN;

add_header X-Content-Type-Options nosniff;

# ssl_stapling on;

# ssl_stapling_verify on;

# ssl_trusted_certificate /etc/nginx/ssl/stapling.trusted.crt;

# resolver 208.67.222.222 208.67.222.220 valid=300s; # Can change to your DNS resolver if desired

# resolver_timeout 10s;

## [Optional] Generate a stronger DHE parameter:

## cd /etc/ssl/certs

## sudo openssl dhparam -out dhparam.pem 4096

##

#ssl_dhparam {{SSL_DHPARAM_PATH}};

location ~ /.well-known/acme-challenge {

root /var/www/onlyoffice/;

allow all;

}

include /etc/nginx/includes/onlyoffice-documentserver-*.conf;

}

Save the file, then reload nginx:

systemctl reload nginx
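Because the domain name appears in several places in that file (the server_name lines and both certificate paths), it is easy to miss one when editing by hand. A substitution like the one sketched below can help. The function name set_server_domain is hypothetical, and it assumes you pasted the configuration with the example name onlyoffice.mybusiness.com still in place.

```shell
# Hypothetical helper: replace the example domain used in this article
# with your own, everywhere it occurs in the given file.
# Assumption: the new domain holds only letters, digits, dots and hyphens.
set_server_domain() {
    conf_file="$1"
    domain="$2"
    tmp_file="$(mktemp)" || return 1
    # Write to a temp file first, then copy back so that the original
    # file keeps its owner and permissions.
    sed "s/onlyoffice\.mybusiness\.com/${domain}/g" "$conf_file" > "$tmp_file" &&
        cat "$tmp_file" > "$conf_file"
    status=$?
    rm -f "$tmp_file"
    return $status
}
```

For example: set_server_domain /etc/nginx/conf.d/onlyoffice-documentserver.conf docs.example.org. It is also worth running nginx -t before each reload, since it checks the configuration for syntax errors without touching the running server.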

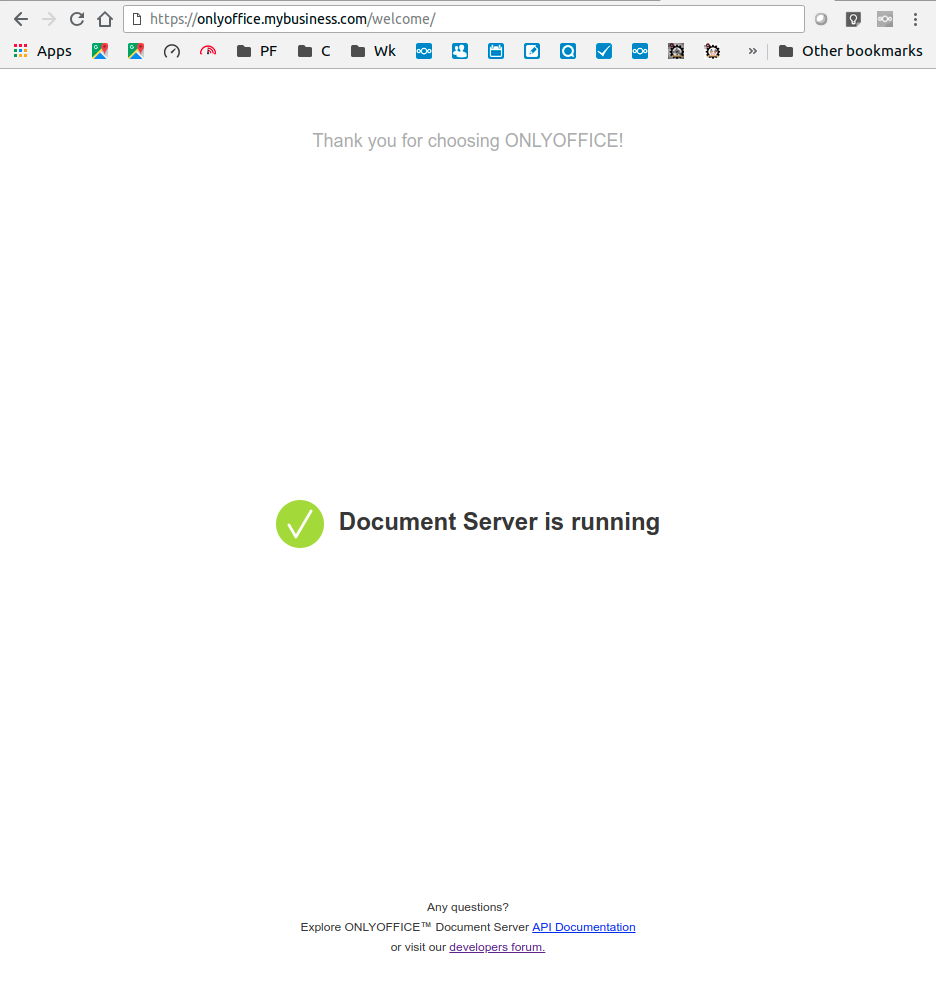

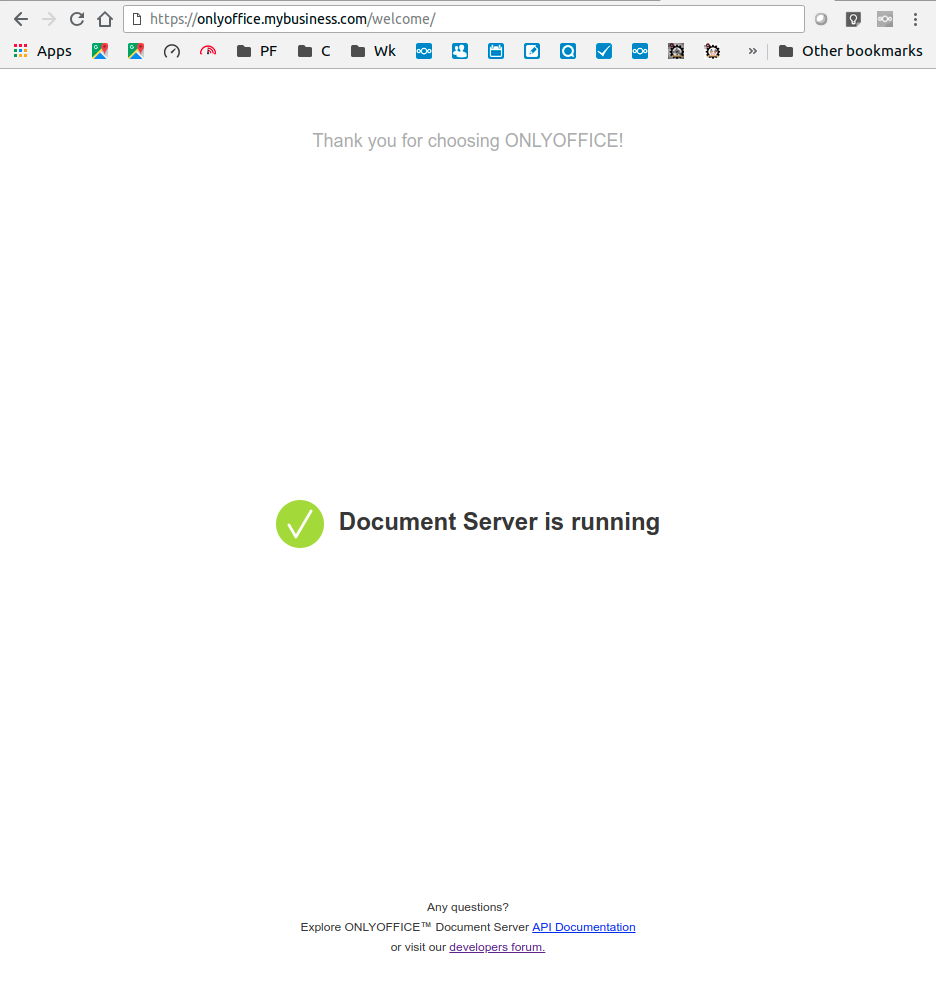

Navigate back to your web page onlyoffice.mybusiness.com and you should get the following now:

And if you do indeed see that screen then you now have a fully operational self-hosted OnlyOffice document server.

If you use these instructions, please let us know how it goes. In a future article, we will show you how to update the container from the snapshot we created earlier.
